People Have the Right to Refuse AI

Britt Paris / Mar 5, 2026

Britt Paris is the author of Radical Infrastructure: Imagining the Internet from the Ground Up, a new book published by the University of California Press.

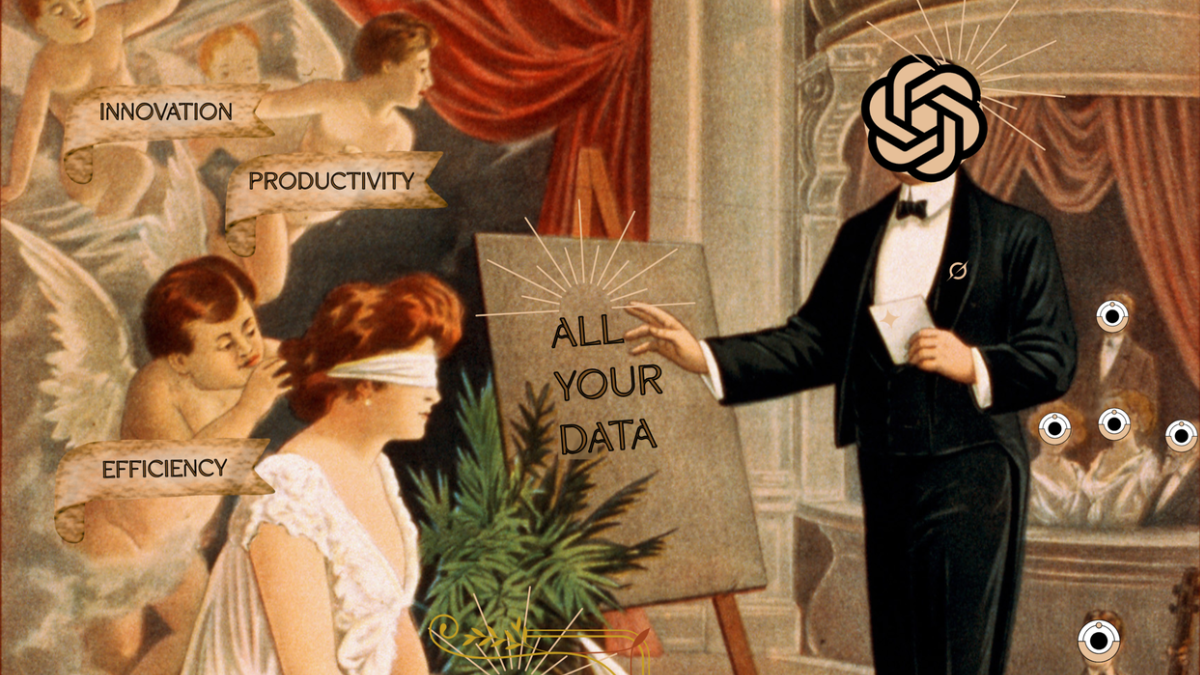

The AI-Deal by Daniela Zampieri / Better Images of AI / CC by 4.0

Financial forecasters are warning of an AI bubble, but that hasn’t dampened business decision-makers’ credulity for the unproven technology. Last month, investors dropped software stocks after Anthropic touted that its Claude model could write code, even as Big Tech companies double down on a massive AI capital spending spree.

At the same time, boosters in higher education insist that AI is inevitable for the future of work, entering multi-million-dollar contracts with large institutions even as students deride AI outputs and the people who make them as “clankers,” and a main purveyor, OpenAI, admits it is still trying to understand what the use case is for education. Users are finding it difficult to turn AI off in email and search engines, and this year, Super Bowl ads—which have long been used to hype up flailing industries—focused heavily on AI, sports betting apps, and cryptocurrency. Meanwhile, more dystopian applications are emerging, including ICE’s use of Palantir’s data aggregation and recommendation systems in its ELITE targeting system. 404 Media reported that, in court testimony, a deportation officer with ICE’s Fugitive Operations Unit likened the app to Google Maps, but for detention.

If AI is inevitable, why is it being shoved down our throats? What options do we have?

Countering the ‘inevitability’ narrative

To investors, the inevitability frame proffered by boosters represents endless opportunity with no downside: AI’s future utility never comes, because it’s always just around the corner. The appeal of this unknowability—the same mechanism used to fuel the dot-com boom (and bust), to lock in support for the auto industry’s use of oil, even to stimulate the development of railroads—has provided the bones, both material and conceptual, that structure the Internet, the grid, and the gasoline that powers data center turbines. All of these immense projects required resources and credulity at scale. To get them built, their boosters lied, cheated, and stole from regular people. AI’s hype model is no different.

The strategies of those hyping AI are not subtle. For instance, consider the promises of Silicon Valley doomers who speculate that AGI will arrive by 2027, and that superintelligence will arrive the following year. More recently, we were treated to a flurry of commentary about Moltbook, which pretends to be a social network for autonomous AI agents but is actually just an advertising showcase featuring LLMs regurgitating conversationally-flavored text. Such projects are cited to argue that transformative AI is coming. Even those who offer a grim future for humanity with AGI suggest that the best we can do is acquiesce to the idea of AGI; if you don’t want it to be mad at you, you’d better start paying for a product that barely works—or, better yet, give it your credit card information so it can start paying itself.

The rich get richer while we pay the costs

What these narratives leave out is the fact that the AI boom is just the most recent example of tech companies trying to make a buck off the data they have been tracking since the advent of social media. It’s an investment they have never been able to morally justify, forcing them to turn to ever-more fanciful use cases: the promise of AGI, curing diseases, reimagining education, and replacing entire workforces. AI promises the world to CEOs, investors, and the credulous public, and while these promises are wielded as cudgels against would-be critics, they also function as bluffs to ensure the uninterrupted swelling of share prices—until, suddenly, they don’t.

Indeed, amid competing prognostications about an approaching AI bubble, it is easy to lose sight of the fact that the technology’s limitless hype is already an insulting mockery of its material costs. At present, AI requires massive amounts of rare earth minerals, energy, water, computational power, and human guidance to work as poorly as it does. This brittle infrastructure is what makes AI so dangerous for the economy. Bubbles burst, and when they do, they don’t take down their powerful backers; they take down regular people—in this case, those who don’t know their pensions are invested in AI, the organizations that have come to rely on these technologies, and the institutions that have bet big on them at the cost of everything else.

You can choose not to be a mark

But you don’t have to participate in AI’s massive inflation of hype. You can refuse to be enthralled by Moltbook or to believe every piece published by boosters in the pages of booster-owned media. AI’s ubiquity is not inevitable if enough of us don’t engage—or if we conscientiously divest.

There are many visions of a future where AI does not exist, or at least does not hasten the planet’s immiseration. There are models like small-scale cooperative technology and workers’ collectives that guide technology—the People’s AI Action Plan is one. And though this relatively uncontroversial vision for AI requires mobilizing masses of people, workers, labor organizations, community groups, and political apparatuses, simply saying no to the technology is another viable option. In any case, a better future requires bringing these fragments together to build a world worth fighting for. Refusing the ‘inevitable’ is the first step to action.
