Why Congress Should Step Into the Anthropic-Pentagon Dispute

Daniel Castro / Feb 26, 2026

Daniel Castro is vice president at the Information Technology and Innovation Foundation (ITIF) and director of ITIF’s Center for Data Innovation. ITIF is a nonprofit, nonpartisan research and educational institute whose supporters include corporations, charitable foundations and individual contributors.

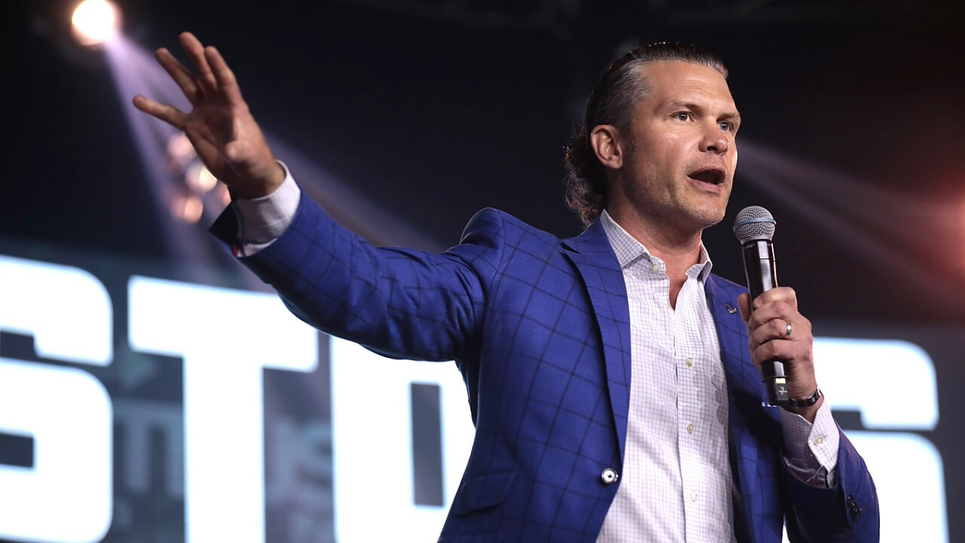

Pete Hegseth, now the US Secretary of Defense, speaks at a summit in Nashville, Tennessee in May 2023.

A simmering dispute between the Department of Defense and Anthropic raises an uncomfortable but important question: who gets to set the guardrails for military use of artificial intelligence — the executive branch, private companies or Congress and the broader democratic process?

According to media reports, Defense Secretary Pete Hegseth gave Anthropic CEO Dario Amodei a Friday deadline to make the company’s AI systems available for unrestricted military use or risk losing its contract.

Anthropic has reportedly refused to cross two lines: allowing its models to be used for domestic surveillance of United States citizens and enabling fully autonomous military targeting. Hegseth has objected to what he has described as “ideological constraints” embedded in commercial AI systems, arguing that determining lawful military use should be the government’s responsibility — not the vendor’s. As he put it in a speech at Elon Musk’s SpaceX last month, “We will not employ AI models that won't allow you to fight wars.”

Stripped of rhetoric, this dispute resembles something relatively straightforward: a procurement disagreement.

In a market economy, the US military decides what products and services it wants to buy. Companies decide what they are willing to sell and under what conditions. Neither side is inherently right or wrong for taking a position. If a product does not meet operational needs, the government can purchase from another vendor. If a company believes certain uses of its technology are unsafe, premature or inconsistent with its values or risk tolerance, it can decline to provide them. For example, a coalition of companies has signed an open letter pledging not to weaponize general-purpose robots. That basic symmetry is a feature of the free market.

Where the situation becomes more complicated — and more troubling — is in the reported threats to designate Anthropic a “supply chain risk” and invoke the federal Defense Production Act to expand government authority over the company’s products. Those tools exist for specific purposes: addressing genuine national security vulnerabilities and mobilizing industry in times of emergency. They are not intended to force a company to accept contractual terms it has rejected.

Using these authorities in that manner would mark a significant shift — from procurement disagreement to the use of coercive leverage. Labeling a company a supply chain risk would likely compel other parts of the federal government to suspend or terminate their use of its services, a consequence that extends far beyond the loss of a single Defense Department contract.

It is also important to distinguish between the two substantive issues Anthropic has reportedly raised.

The first, opposition to domestic surveillance of US citizens, touches on well-established civil liberties concerns. The US government operates under constitutional constraints and statutory limits when it comes to monitoring Americans. A company stating that it does not want its tools used to facilitate domestic surveillance is not inventing a new principle; it is aligning itself with longstanding democratic guardrails.

To be clear, the Pentagon is not affirmatively asserting that it intends to use the technology to surveil Americans unlawfully. Its reported position is that it does not want to procure models with built-in restrictions that preempt otherwise lawful government use. In other words, the Department of Defense argues that compliance with the law is the government’s responsibility — not something that needs to be embedded in a vendor’s code.

Anthropic, for its part, has invested heavily in training its systems to refuse certain categories of harmful or high-risk tasks, including assistance with surveillance. The disagreement is therefore less about current intent than about institutional control over constraints: whether they should be imposed by the state through law and oversight, or by the developer through technical design.

The second issue, opposition to fully autonomous military targeting, is more complex.

The Department of Defense already maintains policies requiring human judgment in the use of force, and debates over autonomy in weapons systems are ongoing within both military and international forums. A private company may reasonably determine that its current technology is not sufficiently reliable or controllable for certain battlefield applications. At the same time, the military may conclude that such capabilities are necessary for deterrence and operational effectiveness.

Reasonable people can disagree about where those lines should be drawn.

But that disagreement underscores a deeper point: the boundaries of military AI use should not be settled through ad hoc negotiations between a Cabinet secretary and a CEO. Nor should they be determined by which side can exert greater contractual leverage.

If the US government believes certain AI capabilities are essential to national defense, that position should be articulated openly. It should be debated in Congress, and reflected in doctrine, oversight mechanisms and statutory frameworks. The rules should be clear — not only to companies, but to the public.

The US often distinguishes itself from authoritarian regimes by emphasizing that power operates within transparent democratic institutions and legal constraints. That distinction carries less weight if AI governance is determined primarily through executive ultimatums issued behind closed doors.

There is also a strategic dimension. If companies conclude that participation in federal markets requires surrendering all deployment conditions, some may exit those markets. Others may respond by weakening or removing model safeguards to remain eligible for government contracts. Neither outcome strengthens US technological leadership.

The Pentagon is correct that it cannot allow potential “ideological constraints” to undermine lawful military operations. But there is a difference between rejecting arbitrary restrictions and rejecting any role for corporate risk management in shaping deployment conditions. In high-risk domains — from aerospace to cybersecurity — contractors routinely impose safety standards, testing requirements and operational limitations as part of responsible commercialization. AI should not be treated as uniquely exempt from that practice.

Moreover, built-in safeguards need not be seen as obstacles to military effectiveness. In many high-risk sectors, layered oversight is standard practice: internal controls, technical fail-safes, auditing mechanisms and legal review operate together. Technical constraints can serve as an additional backstop, reducing the risk of misuse, error or unintended escalation.

The Pentagon should retain ultimate authority over lawful use. But it need not reject the possibility that certain guardrails embedded at the design level could complement its own oversight structures rather than undermine them. In some contexts, redundancy in safety systems strengthens, not weakens, operational integrity.

At the same time, a company’s unilateral ethical commitments are no substitute for public policy. When technologies carry national security implications, private governance has inherent limits. Ultimately, decisions about surveillance authorities, autonomous weapons and rules of engagement belong in democratic institutions.

This episode illustrates a pivotal moment in AI governance. Frontier AI systems are now powerful enough to influence intelligence analysis, logistics, cyber operations and potentially battlefield decision-making. That makes them too consequential to be governed solely by corporate policy — and too consequential to be governed solely by executive discretion.

The solution is not to empower one side over the other. It is to strengthen the institutions that mediate between them.

Congress should clarify statutory boundaries for military AI use and investigate whether sufficient oversight exists. The Department of Defense should articulate detailed doctrine for human control, auditing and accountability. Civil society and industry should participate in structured consultation processes rather than episodic standoffs, and procurement policy should reflect those publicly established standards.

If AI guardrails can be removed through contract pressure, they will be treated as negotiable. However, if they are grounded in law, they can become stable expectations.

Democratic constraints on military AI belong in statute and doctrine — not in private contract negotiations.
