Transcript: Senate Hearing Uses Social Media Verdicts to Press the Case for KOSA

Ben Lennett / May 15, 2026

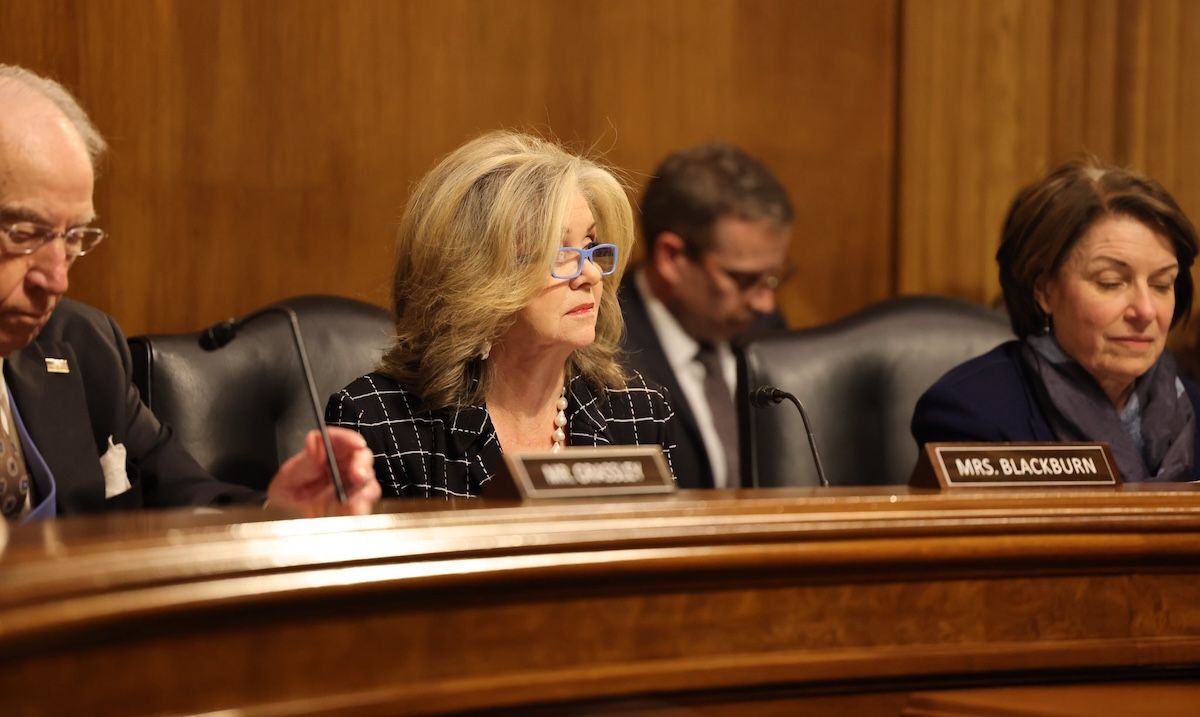

Sen. Marsha Blackburn (R-Tenn.) presides over the Senate Subcommittee on Privacy, Technology, and the Law hearing, “From the Courtroom to Congress: Why Landmark Social Media Verdicts Demand Federal Action to Protect Kids Online.” (May 13, 2026)

The Senate Subcommittee on Privacy, Technology, and the Law held a hearing on Wednesday, “From the Courtroom to Congress: Why Landmark Social Media Verdicts Demand Federal Action to Protect Kids Online.” The hearing comes on the heels of landmark verdicts in California and New Mexico, where juries found tech companies Google and Meta liable for social media-related harms affecting children.

Witnesses included Rachel Lanier, a trial attorney whose firm persuaded a jury in California to find that Meta and YouTube were negligent in deploying addictive design features that contributed to harming a young woman’s mental health. Lanier testified that tech companies operate an "attention economy," deliberately engineering features to act as "slot machines" that seek to maximize youth engagement and advertising revenue.

Lanier and lawmakers also discussed the internal corporate communications obtained during recent litigation, contrasting those documents with prior congressional testimony from technology executives, including Meta CEO Mark Zuckerberg. Trial evidence indicated that Meta possessed internal data detailing the platforms' negative effects on teen mental health and acknowledging a significant presence of users under the age of 13, which contradicted public statements made by the company.

Survivor parents also provided accounts of the fatal consequences of the platforms’ algorithms. Joann Bogard recounted how her child died from attempting a viral choking challenge he had seen on YouTube. Bridgette Norring testified that her son died from fentanyl-laced pills purchased via Snapchat.

The hearing also focused on legislation to protect children online. A major point of discussion was the proposed reform or total repeal of Section 230, which currently limits platforms' liability, with both lawmakers and advocates arguing that changing the law would increase accountability for tech companies. Law professor Mary Graw Leary testified that reactive litigation is insufficient and argued that proactive laws are needed to deter harmful corporate behavior.

Additionally, many committee members emphasized the importance of passing the Kids Online Safety Act (KOSA), a bipartisan bill that passed the Senate in 2024 but stalled in the House. The bill was reintroduced in the Senate last year, but despite having 75 co-sponsors, it has not advanced.

In her opening statement, Sen. Marsha Blackburn (R-Tenn.), chair of the subcommittee, said it was “up to Congress to prevent future harm to our children” and pass KOSA. Several lawmakers also called for the bill to be brought to the floor for a vote. Sen. Richard Blumenthal (D-Conn.) warned advocates not to accept the “watered-down shadow legislation” currently moving through the House Energy and Commerce Committee, with Sen. Katie Britt (R-Ala.) also urging her House colleagues to pass the Senate’s version.

Below is a lightly edited transcript of the hearing. Please refer to the official audio when quoting.

Sen. Marsha Blackburn (R-Tenn.):

Good afternoon. Thank you all for being here. Senator Klobuchar will be in the room in just a moment, and this subcommittee on privacy, technology, and the law will come to order. Today we're going to examine the landmark verdicts out of Los Angeles and New Mexico that prove what so many of us have known for years, that social media companies intentionally designed their platforms to addict our children and to profit from our children. When our children are on those platforms, they are the product. For too long, families who sought justice were turned away at the courthouse doors as big tech CEOs hid behind the shield of Section 230. Parents could not get the accountability they deserved. We have held big tech CEOs in front of congressional committees and held hearings with courageous whistleblowers, like the hearing Senator Klobuchar and I held last year with two whistleblowers who provided testimony under oath that Meta suppressed child safety research and profited from what one user called The Pedophile Kingdom.

While big tech continues acting with impunity, parents and families have not stopped fighting for children across this entire nation. The tide is beginning to turn and these recent verdicts signal a shift. Courts are beginning to conclude that protecting our children online is not a content issue. Indeed, it is a platform design issue. These social media platforms are designed to capture our child's attention, maximize the engagement and profit off what I call their virtual you. And big tech has zero remorse for how this harms children. They have zero respect for the child. We will also hear about Mark Zuckerberg's testimony in the LA trial, and let's be clear, for years, Meta has done everything in its power to keep Mr. Zuckerberg from the witness stand and after his testimony in this case, we know why. He has lied to Congress for years, whether it was about Meta's goal to increase screen time or about Meta's own internal research showing that there was a negative correlation between social media use and teen mental health.

Sen. Marsha Blackburn (R-Tenn.):

One thing is abundantly clear. Meta's record when it comes to protecting children online is indefensible. And these are not the only cases moving forward in federal court. Thousands of cases, many as part of the multidistrict litigation involving families, state attorneys general, and school districts, detail just how deep the rot is at these companies. But these court cases alone are not enough because while courts can punish past harms, it is up to Congress to prevent future harm to our children. That's why Congress must pass my Kids Online Safety Act. The bill simply ensures that online platforms are designed with safety in mind for our nation's children. That's it. It is a simple bill and it's incredibly telling that social media companies have spent tens of millions of dollars to defeat KOSA and any other regulation to protect children online. In the first quarter of 2026, Meta and Google hired one lobbyist for every six members of Congress.

One lobbyist for every six members of Congress. Think about that. I want to call attention to the fact that there are two parents here with us who lost their children due to Big Tech's exploitation, while hundreds of Big Tech lobbyists who are actively working to stop KOSA and other child safety legislation hide in boardrooms across this city. Ms. Bogard, Ms. Norring are here to tell their stories and urge Congress to finally take some action and the American people are paying attention to this issue. I also want to take a moment to thank the parents that are here with us and in the audience today. Their stories are so compelling and their help in passing the Kids Online Safety Act is so appreciated and I know we're not going to stop until we get this to President Trump's desk this year. I look forward to the testimony and I recognize the ranking member.

Sen. Amy Klobuchar (D-Minn.):

Thank you very much, Chair Blackburn, for holding today's hearing and thank you to our witnesses for being here. You, Ms. Bogard. Thank you very much for being here. I'm sure this is difficult. And Bridgette Norring, who I've gotten to know very well, she's from Minnesota, and in your son's name, have just been an incredible advocate for other families both nationally and in our state. Thank you. So here's the issue. As the chairman explained, social media company algorithms are designed to keep people online as long as possible and collect information and then sell as many advertisements as possible. And sadly, all of that has also included kids and very young kids. According to a study, social media platforms generated 11 billion in revenue in 2022 from advertising directed at children and teenagers, including nearly two billion in ad profits derived from users age 12 and under.

To protect the profits, the companies often design their platforms in ways that actually increase teen addiction. And then of course, these platforms become prey to predators. People that are pushing sexual exploitation, people that are pushing harmful content from eating disorders and provide venues as we know in several cases for dealers to sell deadly drugs like fentanyl. One parent told me that her child's social media use was like a water faucet on full blast. The water was overflowing while she is sitting out there with a mop trying to wipe it up, trying to figure out how to get her child off of one platform, going to her eldest child to try to get advice on what to do when they find a new platform. And for so many parents like the two that are before us today to testify, there are permanent, tragic consequences. Bridgette lost her teenage son after he took a fentanyl-laced pill that he believed was Percocet purchased through social media.

Joann lost her teenage son after he tried the Blackout Challenge that he saw online. That's why parents, victims, and state attorneys general have been standing up to hold these platforms accountable and protect others from enduring the pain that they have gone through themselves. In the face of their grief, they're suing social media companies for the harms their platforms have caused. We've had two significant cases just recently. In a bellwether case that may shape how thousands of others are resolved, the California jury found Meta and YouTube were negligent in how they designed their platforms and liable for damaging the mental health of a young girl who frequently felt that she simply couldn't break away. The state of New Mexico won its case against Meta alleging that the company "Knowingly exposes children to the twin harms of sexual exploitation and mental health harm." $375 million in damages.

These courtroom victories are incredibly important, but they cannot be an excuse for complacency. We must continue to empower victims - the courtroom door. I've been a longtime supporter of after finally deciding that we wouldn't be able to pass a bunch of the laws I wanted to pass of getting rid of Section 230 or at least reforming it. And even with some of these victories, the companies have continued to block changes in Congress. Meta even used its power as a dominant provider of online advertising to hide legitimate advertisements by lawyers informing parents and kids of the harms of social media. Senator Cruz and I have teamed up and passed the Take It Down Act. We're literally coming up this next week on the time at which the platforms will be held accountable if they don't take either real images of non-consensual porn or AI-created images down.

We already have criminal prosecutions against those that have spread this trash that have been successful. Congress also needs to pass, and Senator Blackburn and I were together with Senator Blumenthal and Cruz yesterday with a group of parents, the Senate's version of the Kids Online Safety Act, KOSA, to ensure that the platforms design their products to prevent, mitigate harms. Senator Blumenthal and Blackburn's bill, it passed the Senate on a 91-3 vote last Congress and it needs to be taken up and passed immediately. There are vital issues to get right, but we cannot allow children to continue to be in harm's way every time they pick up a smartphone or tablet while we drag our feet on reform. It is time to stop talking about the problem and do something. I look forward to hearing about the witnesses. Thank you.

Sen. Marsha Blackburn (R-Tenn.):

Mr. Chairman.

Sen. Chuck Grassley (R-Iowa):

Thank you.

Sen. Marsha Blackburn (R-Tenn.):

You are recognized.

Sen. Chuck Grassley (R-Iowa):

Thank you, Chair Blackburn, for holding this hearing. This is a very important hearing and I think the vast audience we have proves the importance of the issue and you've been a leading voice in protecting children from social media harm. The recent verdicts against social media companies relating to addictive platforms make very clear that Congress must take meaningful action to protect kids online and that meaningful action must include oversight and legislation. That's why I've introduced multiple bipartisan bills in this area, including the Sentencing Accountability for Exploitation Act, the Ending Coercion of Children and Harm Online Act and the Stop Sextortion Act. I've done this with ranking member Durbin of the full judiciary committee. I've also joined Senator Graham in introducing legislation that would repeal Section 230 immunity that big tech companies have had since 1996. These bipartisan bills aim to hold violent criminals accountable and combat online child exploitation.

On February 19th, 2025 and December 9th, 2025, I held hearings of the full judiciary committee on child safety in the digital era and protecting kids against online exploitation, and for over a year, myself and Senators Blackburn and Hawley have investigated Meta. On April 14th, '25 and April 16th, 2025, I wrote to Meta. In those letters, I raised questions about Meta's reported efforts to silence whistleblowers. Former Meta employees blew the whistle on Meta's employment agreements, the company's ties to China, potential violation of the Foreign Corrupt Practices Act and the company's alleged practices of targeting vulnerable teenagers. In those letters, I raised questions and made record public regarding Meta's use of targeted ads towards teenagers. And then on September 2nd of 2025 and September 10th of the same year, I along with Senators Blackburn and Hawley sent letters additionally to Meta. In those letters, we raised concern about the use of targeted advertisements, protecting teens on their platforms and compliance with the Federal Trade Commission's orders and the Children's Online Privacy Protection Act.

We also noted whistleblower disclosures and public reporting concerns about the company's interaction with the Chinese Communist Party and the data privacy and security measures on WhatsApp. We raised additional questions about how Meta used their generative artificial intelligence platforms targeting kids. To date, Meta has failed to fully comply with their investigative demands. So on March 11th of this year, we sent Meta a follow-up letter. In that letter, we raised new concerns about public reporting and court filings stating that Meta misled the public about the risks associated with their products. However, Meta isn't the only company that must address how they're protecting our kids online. The public deserves to know how these companies are protecting kids from risks related to their platforms. This committee's efforts on these important matters will continue. Thank you, Chair Blackburn.

Sen. Marsha Blackburn (R-Tenn.):

Senator Durbin.

Sen. Richard J. Durbin (D-Ill.):

Madam Chair, I'm sorry that I'm late. There was another hearing upstairs and I'm going to ask my introductory statement to be placed in the record and say two things. Want to change this situation? Want to make it happen soon? Two things we can do. Repeal Section 230, number one. Number two, give every American family access to courts to enforce the protection of their children. You're going to see things happen dramatically if we do those two things. Thank you, Madam Chair.

Sen. Marsha Blackburn (R-Tenn.):

Thank you, Senator Durbin. And to our witnesses, Ms. Rachel Lanier is managing attorney of the Lanier Law Firm, a member of the trial team and served as co-counsel in the social media addiction lawsuit legal team that secured a $6 million verdict against Meta and YouTube. She focuses on holding social media companies accountable for their harmful addictive features, particularly those that impact vulnerable children and teens. Ms. Lanier has been named one of the 500 leading plaintiff consumer lawyers, best lawyers for mass tort and personal injury litigation and top 40 under 40 civil plaintiff lawyer by National Trial Lawyers. Ms. Joann Bogard tragically lost her 15-year-old son, Mason, in 2019 to the choking challenge, a viral social media trend targeting young people online. As a mother and an employee of Indiana's second-largest school district, she pioneered the passage of Mason's Education Act, which was signed into Indiana state law in 2024.

Her advocacy in media literacy has helped schools implement the tools and resources to teach kids how to stay safe online. She is also a volunteer for Fairplay's Screen Time Action Network and a member of Parents for Safe Online Spaces. Dr. Mary Graw Leary is a professor of law at the Catholic University of America, Columbus School of Law, where she directs its modern prosecution program. Dr. Leary is a former federal prosecutor and has worked on issues addressing the abuse and exploitation of women and children, child pornography, sex trafficking, technology and family violence. She serves as chair of the US Sentencing Commission's Victim Advocacy Group, was the former Deputy Director for the Office of Legal Counsel at NCMEC and the former director of the National Center for the Prosecution of Child Abuse. Ms. Bridgette Norring also comes before us today as a mother who tragically lost her 19-year-old son, Devin, in 2020, after he unknowingly purchased a prescription drug through Snapchat that contained fentanyl. She was in the room in 2025 when President Trump signed the HALT Fentanyl Act into law, which permanently classified fentanyl-related substances as Schedule I drugs under the Controlled Substances Act. She continues to be a leading voice in combating the fentanyl crisis and advocating to protect children in the virtual space. I'd like to ask each of you to rise, raise your right hand. Let me swear you in. Do you swear or affirm that the statements you are about to give are the truth, the whole truth, and nothing but the truth, so help you God?

Panel:

I do.

Sen. Marsha Blackburn (R-Tenn.):

Thank you. All answered in the affirmative. All right, Ms. Lanier, we are coming to you first for your five-minute testimony.

Rachel Lanier:

Chairwoman Blackburn, Ranking Member Klobuchar, and distinguished members of the committee, thank you for the opportunity to testify today. Meta and YouTube built features into their platforms to work like slot machines, engineered to hijack the developing brains of children. For the first time in history, an American jury looked at two of the most powerful technology companies in the world and delivered a message, "What you've done to our children is unacceptable." I was in that courtroom and I'm here to tell you what the jury saw and why it matters. My name is Rachel Lanier. I'm a trial lawyer and I serve as managing attorney of our Los Angeles office. I tried the KGM versus Meta-Google case, the first social media addiction case ever decided by an American jury, alongside my own family members, my father, Mark Lanier, my sister Sarah Lanier, and our diligent trial team.

As a parent to four, including two teenagers, the evidence that I saw in that case keeps me up at night. First, children's brains are being hijacked and big tech designed it that way. Children are the future of America, and their brains are being changed by these companies, not for the better. Meta and others operate in what they call "the attention economy." Their entire business model depends on keeping your child's eyes on their apps as long as possible, because attention means money. Our client, Kaley, started using YouTube at age six and Instagram at age nine. By the time she was a teen, she was struggling with depression, anxiety, body dysmorphia, and suicidal ideation. She is not alone. She's one of millions. The evidence revealed platform features were engineered to tap into the brain's reward systems. Infinite scroll, removing natural stopping points, algorithmic feeds optimized for engagement, push notifications timed to reel children back in. Deliberate design choices built to maximize time on the app, because that means more advertising revenue and data harvesting. These platforms cause neurological harm to developing minds. The science supports it and so does the companies' own internal research.

Second, the companies chose growth and profit over child safety. Mark Zuckerberg told this body that children under 13 are not allowed on his platforms. The evidence tells a very different story. Meta's own internal estimates showed over 4 million American children under 13 were on Instagram as of 2015, roughly 30% of every 10 through 12-year-old in this country. Other documents stated the goal, "If we want to win big with teens, we have to bring them in as tweens." Internal Meta documents showed employees describing Instagram as "like a drug," and the company as "basically pushers." Meta's own employees compared themselves to Big Tobacco. Internal Google documents compared their own products to casino slot machines. When employees and whistleblowers raised safety concerns, the answer from the top was clear, growth comes first. Third, this committee has the power to act, and how you act matters enormously. Our founders gave us the jury trial for exactly this moment. The courtroom is one of the most powerful tools we have to force accountability when there is wrongdoing. Brave families pursuing these cases forced these companies to hand over the evidence.

Any legislation must set a floor, not a ceiling. State laws, tort claims, and consumer protections that go further must be preserved. Do not let preemption language become the mechanism by which these companies escape accountability in a courtroom. Section 230 was written before any of these platforms existed and was never designed for the world we live in now. This committee should seriously consider a total repeal of Section 230, or at a minimum, carve out children and teens, carve out algorithmic designs. The First Amendment stands on its own. Free speech does not require addictive design.

Finally, the burden cannot fall on parents alone. Most parents are doing their absolute best, but they are moms and dads up against trillion-dollar companies who have entire teams dedicated to profit, growth, and engagement on their platforms. That's not a fair fight. I represent parents and families every day. I'm happy to serve as a resource for this committee in any way I possibly can. It's a privilege to testify before you. I'm so grateful for the work that you are doing on behalf of America's children. I welcome your questions.

Sen. Marsha Blackburn (R-Tenn.):

Thank you. Ms. Bogard?

Joann Bogard:

Chair Blackburn, Ranking Member Klobuchar, and subcommittee members, thank you for inviting me to share our story. My name is Joann Bogard. I'm a mother of three, a child online safety advocate, a founding member of Parents for Safe Online Spaces, and I live in Indiana with my husband, Steve. We have been blessed with almost 40 years of marriage and three beautiful children. Before retiring, Steve served our community as a firefighter and I worked at our public school system. Seven years ago, I made a promise to fight for change. I made that promise to my 15-year-old son, Mason, while he was on life support after he attempted a dangerous viral challenge that the YouTube algorithm fed to him unsolicited. It is why I have been advocating for the Kids Online Safety Act for over four years. This is my 14th trip to Capitol Hill with other survivor parents, telling the story of the worst day of our lives over and over in order to protect other kids.

I'm here today to ask Congress to finish the job and pass KOSA. Mason was our youngest of three. He was our creative kid, always taking things apart to create something new. He loved fishing, hiking, camping, playing his drums, and entertaining his friends and family with his witty humor. He was smart, funny, compassionate, and generous. He had great friends and went to a good school. At 15, he had just started his first job at a landscape business and was excited to start driver's ed. He wanted to join the Army after graduation.

May 1st, 2019, started as a normal day for our family with our typical routines of work, school, and dinner. That night, Mason gave his dad his typical hug, walked upstairs to take his shower and called to me, "I love you, mama." I replied, "I love you too, buddy." Those were our last words. A few minutes later, we would find Mason's lifeless body, not breathing, no heartbeat. My husband started CPR and got a pulse back. Mason spent a week on life support but never woke up. Our answer to what had happened was on his phone. A self-recorded video where he had tried a viral social media trend called the choking game, that the YouTube algorithm again had fed to him unsolicited. This is a trend where kids make themselves pass out, wake up, and post their seemingly funny videos, seeking those likes and clicks that in today's world are so important to make them feel accepted by their peers. For Mason and too many others, it went horribly wrong and we lost our sweet boy.

Since Mason died, each week I search for these choking challenge videos on social media. I find dozens within minutes and I report them, yet they're rarely removed. Tech companies' failure to act on the spread of dangerous viral challenges is just one of the ways that platform executives have repeatedly proven that they will not self-regulate in a manner that will consistently protect children. They give parents and young users and Congress a false sense of safety with promises of protections and safety measures. Research has shown that just 8 out of Meta's 47 promised parental tools and safety features actually work as advertised. I was at the Senate hearing in January 2024 when TikTok CEO Shou Zi Chew lied about TikTok having zero challenges on their platform. I still find them today. At the same hearing, Meta's CEO Mark Zuckerberg lied to senators, including some of you before me today, claiming to have no knowledge of a correlation between social media and mental health harms.

I was at the social media trial against Meta and Google in Los Angeles, where Mark Zuckerberg lied again about his knowledge of his product being harmful, and took no responsibility for decisions he made that put youth on Instagram at risk. Thankfully, a jury found Meta and Google liable and negligent on all charges. Comments are often made that it is the parents' responsibility to protect their children online. I am here today to say that even the best parenting skills can't fight these companies and their algorithms. I was the engaged parent who did everything that the experts advised, yet this harmful content still found its way into his phone. My experience, and the experience of Bridgette and the other survivor parents sitting behind me today, make it clear that parents cannot fight this alone.

So when people ask, "Where are the parents of these children?" I can tell you the answer, "We survivor parents are here on Capitol Hill, sitting through countless hearings and meeting with members of Congress. We are in the classrooms. We are in the town halls, starting foundations, knocking on Meta's door in Silicon Valley, rallying outside the Apple office, meeting with President Trump, and tomorrow we have a meeting with Speaker Johnson. That is where you will find us, fighting for your children, your grandchildren, because ours are gone and we don't want any other family to feel this pain." We have started a huge movement and we aren't going anywhere until it's finished. I'm asking every lawmaker to join us in this fight. Do what you do best, pass legislation to protect America's young people. I pray that this is the very last hearing for KOSA and that it moves forward quickly in the Senate and soon becomes law to honor the children who have died at the hands of these platforms, and begin protecting every child online.

Parents were glad to hear Senate Commerce Committee Chair Ted Cruz announce at our Mother's Day rally yesterday that he plans to mark up KOSA. We ask him to schedule that markup without delay. Thank you, Senator Blackburn and Senator Blumenthal, for being champions of this bill in the Senate, and to Ranking Member Klobuchar and the subcommittee for standing with the survivor parents and allowing me to share our story with you today.

Sen. Marsha Blackburn (R-Tenn.):

Thank you. Dr. Leary?

Mary Graw Leary:

Thank you, Senator Blackburn, Ranking Member Klobuchar, and thank you to all the parents in the room today. It's an honor as a professor who looks at these things from an academic standpoint to be with such brave and tremendous people. I just regret that we are all here. The title of today's hearing has embedded in it certain implications. First, that private law and public law complement each other to protect. Second, that is indeed the goal: to protect and to prevent harm, not simply to provide a remedy for victims after the harm has occurred. And finally, it also implies a question, do these verdicts demand Congress to act? And the answer is a resounding yes.

I first want to speak generally, and then specifically. Generally, there is a historic and fundamental obligation of Congress to protect. Alexander Hamilton asked in 1787, "Why has government been instituted at all?" And his answer was, "Because the passions of men will not conform to the dictates of reason and justice without constraint." The Supreme Court has noted, "The most basic function of any government is to provide for the security of the individual." And while undoubtedly you must balance these obligations with other constraints and obligation, there is no question that federal legislation plays an essential and unique role in protecting citizens from harm. In short, we don't leave it simply to victims to fend for themselves, particularly, as Ms. Lanier pointed out, when they are fighting an extraordinarily powerful industrial force.

There's a historical pattern here. When new industries emerge, there often is a time where government doesn't intervene, waiting to see what the harms are to balance the benefits of the new industry against the potential harms and to learn more. However, as the industry grows and its potential to inflict harm on the public becomes more apparent, the public law, public statutes often respond with a legal framework that does two things: incentivizes protection and balances the advantage of that industry with a threat of harm to the public.

These take many forms, including but not limited to public welfare offenses, criminal offenses, regulations, civil remedies. In this specific space, however, I would suggest to the committee and to Congress that there's a particular need for Congress to act. First, because it is beyond dispute in the words of the Supreme Court that the government has a compelling interest in protecting "the physical and psychological well-being of children." And less often quoted, but equally as important, the Supreme Court has noted that "parents and others who have the primary responsibility for children's wellbeing are entitled," entitled, "to the support of laws designed to aid the discharge of that responsibility."

But at this point, Congress is part of the problem. Congress has not self-corrected the ecosystem that exists, which provides no deterrence to tech platforms to engage in dangerous actions and every incentive to do so. This committee is well aware and we've referenced the history of Section 230, but in 1996, Congress took this public law action seriously, saw the threat coming and provided a narrow, but important good Samaritan protection for companies to engage in that kind of incentive deterrent structure. But we all know what has happened, a concentrated effort by these tech companies through courtrooms across America to change that, not to its narrow protection, but to what I refer to as a de facto absolute immunity.

And today there is no question the connection between these harms. In 2024, 62.9 million images and videos and other files were reported to the CyberTipline. 546,000 reports concerning online enticement, a 192% increase. Other aspects of the government have acted. The FBI, the surgeon general, attorneys general from across the country. Today, it is my understanding that the Alliance to Counter Online Crime has released a report that ties Section 230 directly to a number of particular harms, including CSAM, human trafficking, drug-related death, teen self-harms with exponential growth.

Knowing what we know, having this information before us, these trials have taken place, and it seems that these juries did not need more than a few weeks to do what Congress has not done in two decades and over 41 hearings. They waded through the mountain of evidence. They listened to the experts. They read through the internal documents. They allowed tech a full-throttle defense, and then swiftly and resolutely held these companies accountable. Congress should do the same by correcting Section 230 and enacting other public laws to address the more specific threats to children that have emerged. Thank you, and I welcome your questions.

Sen. Marsha Blackburn (R-Tenn.):

Ms. Norring, you're recognized.

Bridgette Norring:

Chair Blackburn, Ranking Member Klobuchar, and members of the subcommittee, thank you for the opportunity to testify at this important hearing. It is an honor and privilege to be before you today. My name is Bridgette Norring. I am a wife, mother, grandmother, advocate, Founder of the Devin J. Norring Foundation, and Co-founder of Parents RISE!. I'm proud of the work that we do, though I wish we didn't have to do it. Six years ago, my son died from fentanyl poisoning after Snapchat connected him to a drug dealer. Devin was only 19. He loved football, skateboarding, BMX riding, writing and creating his own music, and he was his siblings' greatest protector.

Prior to the start of the pandemic, he began suffering from migraines and dental pain. When critical medical appointments were canceled the first week of the lockdown, he turned to what he and so many other American children believed was a quick and safe solution. He turned to Snapchat, and Snapchat connected him with a local drug dealer selling counterfeit pills. One pill ended his life, while Snapchat let the dealer continue selling to children on its platform long after Devin died. More like two years after Devin had died. In the spring of 2021, myself and other families who had lost their children to Snapchat met with Snapchat executives, including Jennifer Stout, Senior VP Global Policy and Platform Operations. Jennifer Stout told us that as parents, we should have been monitoring our children better, and that because of Section 230, we could not sue Snapchat. And at first, we believed them. Then we watched as more kids died as Snapchat still would not take down known predators and dealers, and as they did not even warn families of the harms happening on their platform, even as we begged them to do so.

Section 230 is why these companies think that they have the right to trade in our children's lives, to trespass in our homes, and to put digital nicotine in their products. For years, we were ignored and countless more children died. Then things began to change. A Facebook whistleblower came forward. Attorneys collected just enough evidence to demand discovery, and these lawsuits are how we finally started to get the truth, the truth in their own words, the truth that we as parents already knew. I submitted examples of those unsealed records with my written testimony. Remember all the years these companies swore that they had done nothing wrong, that they were not designing addictive products and were doing their best to protect our children? It turns out none of that was true. One YouTube document says, quote, "Vision, we aspire to create an app that is addictive," end quote. A Meta employee wrote, quote, "Instagram is a drug. We're basically pushers," end quote. And another quote, "Child safety is an explicit non-goal this half," end quote.

Internal documents reveal that Meta was aware that it was recommending known children to known groomers and at nearly four times the rate it recommends children to non-groomer adults. How is that not unreasonably dangerous by design, and how is that not criminal? And yes, we are making progress, but it shouldn't take a years-long David and Goliath battle every time a corporate predator hurts a child. That is not justice. And tech companies shouldn't be allowed to continue to profit off of our misery and loss, especially now as we enter an even more dangerous era with AI. In fact, I just learned that while Senator Hawley has been trying to make clear that it is illegal for AI companies to allow for the sexual exploitation of children, through the GUARD Act, the AI industry is trying to get harmful state laws passed like Colorado's HB-1263, which says that AI companies can allow for sexual abuse of a child as long as they can show that it was not technically feasible to avoid it.

Of course, it is the AI companies that get to determine what is and is not feasible. We are talking about the sexual abuse of children versus a product you get from app stores in the entertainment category. That any state could excuse the sexual exploitation of children just because AI is involved is crazy to me. It's like letting a pedophile off the hook because he says he did his best to avoid hurting a child. These companies can design systems that don't hurt, abuse, and manipulate children. They are just choosing not to in the name of maximizing their profits. This is what American families are up against. These companies hid behind Section 230 immunity, and now that we are making progress, they are cleverly pushing bills that require them to do virtually nothing, while allowing the abuse and manipulation of our children to continue in the name of innovation.

This isn't innovation, it's abuse. My family and thousands like mine have been sounding the alarm far too long. Some of you have stood strongly with us, and each day more lawmakers are finding the courage to look beyond political parties to truly fight for American families, safety by design, and corporate accountability. And now I am here today to tell Congress that it is time to choose. Congress must ensure that the Senate version of KOSA will not risk preemption of state and victims' rights, and then it must pass it. Congress must pass strong common-sense AI chatbot requirements like the GUARD Act. Congress must also pass the Cooper Davis and Devin Norring Act, so that social media companies are required to report illicit drug activities occurring on their platforms to law enforcement. And Congress must absolutely reform Section 230.

And now that the courthouse doors are slowly opening, Congress must ensure that they stay that way for our families. Tech companies don't fear regulatory fines; those are just the cost of doing business, but they do fear accountability in court and they do fear discovery, and they sure do fear the truth being exposed publicly. I made a promise to Devin that his life would not be in vain. I have since extended that promise to the countless victims and families as I carry their loved ones with me on this painful journey, no mother should ever have to endure. This is not about politics. This is about whether our elected officials are willing to protect our children in a digital world that has evolved faster than our laws. And I'm asking Congress to stand with families and against the powerful companies that choose to harm American children by design and then lie to Congress and the world about what they'd done. You can no longer claim both. Thank you, and I look forward to your questions.

Sen. Marsha Blackburn (R-Tenn.):

Thank you, Ms. Norring. Thank you each for your testimony. Ms. Lanier, I want to start with you with the questions, and we'll each do a five-minute round of questions. So you sat in the second chair at the LA trial, correct?

Rachel Lanier:

Yes.

Sen. Marsha Blackburn (R-Tenn.):

So you had a front row seat?

Rachel Lanier:

I sure did.

Sen. Marsha Blackburn (R-Tenn.):

All right. I want to go into some of Mark Zuckerberg's testimony because I think one thing is very clear as you look at this: he just flat-out lied. And I think he did it over and over again. So let's walk through a few of the examples, because I think these examples show a pattern of willful disregard for the American public and for our children. And first, when he was before this committee in 2024, he said that, and I'm quoting him now, "The existing body of scientific work has not shown a cause or a link between using social media and young people having worse mental health outcomes." And the truth on this is that Meta had a document called, and I quote again, "Known Negative Effects of Facebook and/or Social Media in General on Teens," end quote.

Meta's document even noted some of those negative effects, including increased sleep disruption, anxiety, body image pressure and depression. So despite what he said under oath, he knew this and he was aware of what their virtual products were doing to children, but they did it anyway. So he just lied to us when he came before the committee for a sworn testimony. Is that correct?

Rachel Lanier:

Thank you so much for the question, Chairwoman Blackburn. In our trial, we did find that statements that Zuckerberg had made to Congress directly contradicted internal documents that had been top secret until the courtroom blasted them wide open. And one of the documents that you listed is unfortunately one of hundreds that showed that Meta internally knew that their platforms were causing tremendous mental health harm, including suicide, to young children and young minds in America. This is one example. And unfortunately, we confronted him time and time again, and he was very well prepped and tried very hard to dance around it and use his words to avoid accountability. But the jury saw it our way, which was that he can't shy away from his own internal company documents that showed, even in another document, one of the worst aspects of teens' relationship on the platform was time spent on the platform. It causes tremendous damage to teens.

Sen. Marsha Blackburn (R-Tenn.):

See, I don't think he was that well-prepped, because I think he thought he was going to go in there and he was going to win and people called out this litany of lies that Mark Zuckerberg has been telling the American people.

Okay, I want to go to another one. This was in '24 in some congressional testimony, and I'm quoting again his statement. Zuckerberg said, and I quote, "We don't allow people under age 13 on our service," end quote. Now, when you compare that to the internal Meta document that revealed ... So they knew this. This is their research, just so everybody is here, and I doubt we have somebody from Meta in the room today who wants to defend Meta on the lies. Compare that with their document that shows they had 4 million children under the age of 13 on Instagram alone in 2015, and that is 30% of all 10 to 12-year-olds in this country. In 2015. And we know it is even more than that now. And I think it's also worth noting that Meta wasn't even collecting birthdates until December 2019, so I think it's clear that Mr. Zuckerberg's testimony was a blatant lie. Is that correct?

Rachel Lanier:

Our jury certainly saw it that way, and so did we. Instagram didn't even start collecting ages for anybody creating an account until December of 2019. And for current users who already had an account, Instagram didn't even collect age data until 2021. So Instagram's own internal folks said, "Our lack of proactive action on detecting under-13 accounts undermines our credibility." And another person wrote, "The fact that we say we don't allow under 13s on our platforms yet have no way of enforcing it, is just indefensible." And the jury agreed.

Sen. Marsha Blackburn (R-Tenn.):

Thank you. Senator Klobuchar.

Sen. Amy Klobuchar (D-Minn.):

Thank you very much, Chair. I'll start with you, Ms. Norring. Thank you for being here today. When you lost your son, you said, "All of the hopes and dreams we as parents had for Devin were erased in the blink of an eye, and no mom should have to bury their kid." Can you tell us more about the hopes and dreams you had for his future and what it feels like to be here once again today?

Bridgette Norring:

So before Devin died, he had dreams, goals he had set to go to California that summer to look into future schools for his music production. He wanted to know everything about it. I was nervous about him going all the way across country, as any mother would, but those were his goals. Those were his dreams. He also worked full-time for Factory Motor Parts. He loved going in and doing his job. As a mother, I mean, we have so many dreams. I wanted to see my son get married. My daughter's getting married soon. He'll never see that. I have two grandbabies now. He'll never know them. They only know him through pictures. So I'll never get to see him have grandchildren or what he could have potentially grew into.

Sen. Amy Klobuchar (D-Minn.):

Thank you. What policy changes would you like us to make?

Bridgette Norring:

I would like to see the immunity taken away from big tech. So, Section 230 definitely needs to be reformed. There needs to be accountability, definitely giving parents access to the courtrooms, because that is the only way that they will stop doing what they're doing.

Sen. Amy Klobuchar (D-Minn.):

Thank you.

Bridgette Norring:

Thank you.

Sen. Amy Klobuchar (D-Minn.):

Ms. Lanier, thank you for explaining the harms and answering the questions that the Chair posed. What were the biggest challenges you faced in that landmark case in holding Meta and YouTube accountable for how they designed their platforms?

Rachel Lanier:

Thank you so much, Ranking Member Klobuchar, for the question. It was really an interesting case, because as you've heard and you've heard about some evidence, these companies, including Meta, targeted children to get them on the app, to keep them on the app as young as possible and as long as possible. And then, interestingly, the biggest challenge we faced in the courtroom was the flip side of that. The company wanted to target the children in the courtroom and the children's families and say, "It's not our fault. Blame the parents." Even though our mom, in our case, did everything she could to try and protect her child.

Sen. Amy Klobuchar (D-Minn.):

Very good. Thank you. And Professor, in your testimony, you discussed how deterrence is one of the best methods of prevention. What do you believe is the best way to ensure that the social media platforms are deterred from designing their platforms in this way?

Mary Graw Leary:

Thank you, Senator Klobuchar. I think that we understand the litigation is a deterrent, to be sure, but unfortunately in many situations it is after the harm, but it is a deterrent. But it has to happen in combination with public law with not only access to the courtroom, which we've been fighting for for so long and great attorneys have been able to make that happen, but also, as these new technologies emerge, public laws that are designed and targeted at them, things like the Kids Online Safety Act, things like the GUARD Act that are addressing simple specific actions for far too long ... Well, let's put it in context. These numbers for the verdicts were tremendous, but as the Senator pointed out, more has been spent in lobbying to prevent these laws taking effect than at least one of the verdicts. So, I think that both have to work together, just like every other industry in America who faces guidelines, deterrence if they act poorly, and reasons to act appropriately.

Sen. Amy Klobuchar (D-Minn.):

Just very quickly, I'll ask you back to you, Ms. Lanier, as well. This TAKE IT DOWN Act is actually, part of it's taken effect already, but the part about having them take it down in 48 hours is going to be taking effect this coming week. Are you going to be watching for it? What do you see as ... What do you think is going to happen, and what do you think we should do if they don't do it? Or maybe more likely, what should you do? So, go ahead.

Rachel Lanier:

Thank you. I think the key for any legislation again is to allow the courtroom to be the last line of defense. So, any protective measures that are going to move us toward child safety, I generally support, of course. And if there's any language, we have to be so careful that it's not used as a way to avoid accountability in the courtroom, which is exactly what the defendants and the companies in these cases try to do time and time again.

Sen. Amy Klobuchar (D-Minn.):

I've seen a few bills like that. Yes. Do you want to add anything, Professor?

Mary Graw Leary:

Just quickly to say that yes, we will be watching them. And I think what's essential is that not only have the platforms blamed the parents, but they have also acted like it's the bad guys who are the source of this and they've got nothing to do with it. So, these bills that are targeting, like the TAKE IT DOWN Act, the very platforms that are facilitating it, those kinds of laws are essential.

Sen. Amy Klobuchar (D-Minn.):

Thank you.

Sen. Marsha Blackburn (R-Tenn.):

Senator Cornyn.

Sen. John Cornyn (R-Texas):

I assume that Meta is appealing the decision?

Rachel Lanier:

Absolutely.

Sen. John Cornyn (R-Texas):

Safe bet.

Rachel Lanier:

Yes, sir.

Sen. John Cornyn (R-Texas):

What is the basis for their appeal?

Rachel Lanier:

Everything under the sun. Section 230, even though our case focused on features, not content, but somehow everything in their platform is content. So, nothing can be a feature; it's all content. So Section 230 is a massive one. The amount of rulings our judge had to make, and she is so brilliant, time and time again on Section 230, would make anyone's head spin. So, that's a key issue. They also think that the verdict was too high, the punitives were too high, which is interesting, because we actually found them to be quite moderate against a multi-trillion dollar company. So, those are some of the grounds.

Sen. John Cornyn (R-Texas):

So, the basis, the theory upon which you pursued the case, the legal theory, was it common law or was it statutory?

Rachel Lanier:

It's a little bit of both and in a way, but in California state court, we were suing under California state law theories of negligence, theories of failure to warn, and trying to hold the company accountable that way.

Sen. John Cornyn (R-Texas):

Professor Leary, Section 230, of course, was enacted as you pointed out, 1996. It broadly protects online services from being held liable for transmitting information. In other words, for the content that they provide. But there are some statutory exceptions that have already been carved out. For example, this federal immunity generally will not apply to suits brought under federal criminal law, intellectual property law, or any state law consistent with Section 230, certain privacy laws applicable to electronic communications, or certain federal and state laws relating to sex trafficking. So, there's already been some carve-outs in Section 230, and obviously, we have been trying to wrestle with that provision for a long time now without much success, but is there a more targeted or surgical approach that you believe that Congress could take that would advance the cause we're talking about here today?

Mary Graw Leary:

Yes. I think the problem with carve outs is, as this Congress struggles with, the technology changed so rapidly that a piecemeal carve out system is just not going to work, and there isn't historical precedent for it. I think that Section 230 should be repealed, with the exception of the Good Samaritan provision. That should stay in the law, because that's really important. That explicitly does incentivize these platforms to remove material, and they will not face litigation for that. That should remain in the law, but the other aspects I think should be absolutely removed.

Sen. John Cornyn (R-Texas):

Ms. Bogard, did you say you're going to be meeting with the speaker of the House of Representatives?

Joann Bogard:

Yes, sir.

Sen. John Cornyn (R-Texas):

Well, in addition to KOSA, which I'm proud to have been a co-sponsor of, which Senator Blackburn led, we passed the ENFORCE Act, which was one that would ensure that offenders who use generative AI to produce and distribute CSAM and obscenity are subject to the same statutory penalties as those who create or distribute other forms of child sexual abuse material. The ENFORCE Act passed the Senate unanimously in December, but has been parked over in the House of Representatives since that time. So I hope you will bring that up to the speaker when you meet with him soon.

Joann Bogard:

I will do my best.

Sen. John Cornyn (R-Texas):

Thank you.

Joann Bogard:

Thank you.

Sen. John Cornyn (R-Texas):

So, one reason why Congress essentially banned Chinese ownership of TikTok was because social media can also be used as a threat to national security matters. I remember after the terrible attacks on October the 7th by Hamas into Israel, killing innocent Israelis, that obviously a war ensued between Israel and Hamas and its sponsor, the Iranian regime. But TikTok in particular, I believe that, was documented as essentially producing and pushing propaganda, which basically was critical of Israel for defending itself against that attack. I might ask you, Ms. Lanier, that obviously some of these companies are domiciled here in the United States, but obviously, this is a global phenomenon. What concerns do you have about foreign or offshore ownership of some of these capabilities and our ability to regulate or deal with those?

Rachel Lanier:

The scary thing that we saw, Senator, is that these companies will only be as protective as the country forces them to be. So, no matter where they live, they want to skirt around the rules as much as they possibly can, and they will mince words and do as much as they can to make that happen. So, when these companies now are headquartered in America, it gives our country more ability to regulate the people here, the people who are operating the platforms. And I think that's really key, but it's also key for all of you in power to be able to pass the sorts of protections that we're talking about here today so that America's children can be protected.

Sen. Marsha Blackburn (R-Tenn.):

Senator Durbin?

Sen. Richard J. Durbin (D-Ill.):

Thanks, Madam Chair. Let me thank all the witnesses for being here. I know some of you have met with us before and continue to appear before Congress and make sure we hear the whole story, particularly those of you who have lost a loved one. Keeping the memory alive of that person is part of your effort as well, and you do that so effectively. It was a little over two years ago. We had a hearing in this committee, the Senate Judiciary Committee. I was the chair of the committee, and Senator Grassley and Senator Graham representing the Republican side of the aisle, and we agreed on a bipartisan basis that we were going to issue subpoenas to the CEOs of the major companies. A lot of people said, "You're wasting your time. They'll never show up." They did. The CEOs of Discord, Twitter, TikTok, Snapchat, and Meta appeared before us with sworn testimony, and they were saying things that are still being quoted today, obviously were not true.

There were some graphic, emotional moments. I remember when Senator Hawley had Mr. Zuckerberg turn to the audience and apologize. I've never seen that happen in a hearing before. The point I'm making to you is, you have taken the time to come here and help us and those following this hearing to hear your side of the story. I think it's time for us on a bipartisan basis to call these CEOs back and to ask them what's happened in two years, to talk to them about the losses that have occurred and ask them what they're doing. I don't expect straight answers, Mrs. Lanier; you didn't get them in court either, but that confrontation on a public basis is a way to inform the public of the danger of what we're talking about. Holding them personally accountable under oath, as you testified under oath, as to what they say I think is critical. Mrs. Lanier, you talked about what happened in court when you were pursuing your case. I take it Mr. Zuckerberg was a witness?

Rachel Lanier:

Absolutely.

Sen. Richard J. Durbin (D-Ill.):

How long was he on the stand?

Rachel Lanier:

We were restricted to have him on for one day, because he's very busy.

Sen. Richard J. Durbin (D-Ill.):

Well, he may be very busy, but your day paid off. I thank you for your efforts in that regard.

Rachel Lanier:

Thank you.

Sen. Richard J. Durbin (D-Ill.):

And let me ask you, Mrs. Bogard, what have you found of other parents who've gone through similar tragedies as yourself? Have you been able to reach out to them and talk?

Joann Bogard:

Talk to them about?

Sen. Richard J. Durbin (D-Ill.):

About their losses.

Joann Bogard:

Talk about their losses? Absolutely. I have hundreds of parents who reach out to me, and the parents sitting with me here today and the parents who couldn't be here today, we all advocate together. We work together to put education in schools. All of these parents have those losses that Bridgette described so eloquently, that we will never see these dreams we had for our children. When I talk to these parents about their losses, it's the same across the board.

Just since Mason died seven years ago, I have had 104 parents reach out to me saying that their child has died from the choking challenge. That's one harm, one challenge, just the choking challenge; 104 more kids have died. Some of them are here with us today. We have got to do something. Agreeably, across the board, we all agree something needs to be done, and I don't think this can be fixed with ... There's not one big fix for this issue. We need litigation. We need litigation. We need legislation. We need education. But putting legislation in place is such a huge first step that we can build upon.

Sen. Richard J. Durbin (D-Ill.):

Well, I want to join with Senator Cornyn, who had to step away here, in encouraging you in your meeting with the speaker to ask him to make a priority on this issue; he can make a difference. And we need to do our part here. We haven't taken this issue to the floor yet. We should. It's time for us to bring it to the floor and have a fulsome debate on it and vote on it. Before we have another hearing to discuss it, let's do something about it on a bipartisan basis. This has been a bipartisan issue from the start. We want it to continue to be. And so, I made that proposal before and I'll make it again. My proposals are number one, get it to the floor, get a debate, get a vote. And secondly, call the CEOs back under oath, hold them accountable again. Thank you, Madam Chair.

Sen. Marsha Blackburn (R-Tenn.):

Thank you, Senator. And I agree with you. It is time to take it to the floor and pass it. Certainly do. Senator Moody, you're recognized.

Sen. Ashley Moody (R-Fla.):

Thank you. I was the Attorney General of Florida before I became a United States Senator, and I thought the biggest challenge for me becoming the Attorney General as a mother still having a kid in school at these very, I believe, fragile ages. I thought that was going to be the biggest challenge, but it ended up being the biggest driver to be still dealing with what I call the backpack issues while I was in a place to do something about it became one of my biggest drivers and inspirations and drove me every single day, because we're living through a period of technological change and innovations that once took generations to reshape society. How we all navigate that as parents, they now emerge almost overnight.

Our children are on the front lines of these changes before the risks are even fully understood, especially by those of us dealing with it every day. And we've seen this pattern before. Industries move faster than policymakers, technology outpaces oversight. And by the time the public and even lawmakers fully understand the consequences, an entire generation of children has already been irreversibly exposed to harm. The problem becomes even more troubling when companies are not merely aware of risk to children, but are actively designing their products to maximize engagement, dependency, and despicably profit. America's children are not piggy banks for big tech to shake down to satisfy their next quarterly earnings call.

And that is why, as attorney general, as the top enforcement officer in Florida, I fought back in court to end these abusive practices. We brought suit. Specifically, we argued that Meta intentionally designed features to keep children and teenagers compulsively engaged and addicted to its platforms. The response to that litigation, like Florida's, that I'm seeing all across this nation, is incredibly disturbing. They try to get cases dismissed by arguing that many of the features keeping children hooked on social media, like endless scrolling, autoplay, recommendation algorithms, incessant notifications, implicate protected free speech and editorial functions under the First Amendment and Section 230. In other words, they don't only want to keep doing these obviously harmful practices; they don't want anyone to even second-guess that, and they argue that they have the legal right to continue harming kids. I want to repeat that. They argue they are legally entitled to addict and harm our children, and we can do nothing about it.

They don't want parents. They definitely don't want us lawmakers thinking, "Well, maybe these platforms don't have the best interests of our children in mind. Maybe we ought to make sure that they are protected." And what has become abundantly clear is that the private industry cannot be relied upon to prioritize the safety of children when those protections conflict with the bottom line. So, it's obvious we have to do something to pass common-sense legislation, because I keep hearing the word immunity. And let me just say, I showed up today and it didn't even hit me until I looked at this table and talk about emblematic moms on a mission. I mean, thank you for being here today. It breaks my heart that you're coming here after the tragic things that happened to your family, the loss of your sons, but thank you for standing up and speaking out and saying, "This can't be. Our courts can't be the catchall because we don't get it right here."

And I've heard terms like immunity. I used that term yesterday. Stop saying Section 230. They're using it as a shield. They're using it as a get out of jail free card. They're using it for immunity. And I heard join us in this fight. That term has been used; join us in this fight. If you're not going to join us in this fight, just get out of our way. Stop being a shield. Any lawmaker that doesn't demand that KOSA be brought to the floor for a vote and that we pass it, they're standing in the way between us and protecting our kids. They're acting as a shield for these companies that are putting profit over protection.

I would like to know if you can tell us, Ms. Lanier, first, most people assume, many parents assume that people are getting to our children because the children are seeking out actively these things. And I think we're hearing today that imagine if KOSA's duty of care had been in place in the two years following the choking challenge. Imagine what the parents have had to deal with that came after you if they had had that duty of care in place. So, can you tell briefly to the committee how these algorithms are getting pushed to our kids, as opposed to what many people believe they're going out and seeking these things?

Rachel Lanier:

Absolutely. Thank you, Senator Moody. These algorithms, a good analogy that one of the whistleblowers in our trial, Mr. Boland, who actually has also testified here, he told the jury, it fits very well here. Imagine going into a bookstore and you pick up a book and then you set it down and you pick up another book. That's normal, and you see different sections of books and that's that. What the algorithm does is so different and so creepy. The algorithm is watching our children watching it. So, what happens is, is imagine with an algorithm, you go into a bookstore, you pick up the first book, and it has to do with rainbows and the color blue and what does that mean. And if you look at it for too long, all of a sudden, all of the books behind it change in the bookstore, and they tailor themselves to be something that it thinks you want to see, because these algorithms are made to keep people on the apps, especially children. And children who have vulnerable brains don't have the self-regulation skills to get out once they're pulled in. It's really hard for them.

So, the algorithm sees what they like and shows them more, or sees what keeps them there and shows them more. Even when the algorithm is showing them something scary or something they don't like, the algorithm is designed to keep them there. The algorithm has no morals. The algorithm doesn't care. The algorithm is made to keep them there, and it's horrifying.

Sen. Marsha Blackburn (R-Tenn.):

Thank you, Senator Moody. Senator Blumenthal.

Sen. Richard Blumenthal (D-Conn.):

Thank you. Thank you all for being here. This testimony is very, very powerful. As I said yesterday in our Mother's Day rally, never doubt that you are making a difference, and your call for action, not chocolates or flowers for Mother's Day, I think, was moving beyond words. And let me just say, when you meet tomorrow or whenever with Speaker Johnson, don't just ask him for a law, ask him for KOSA, the Kids Online Safety Act, not the version that's come out of the House Energy and Commerce Committee, but the version that passed the United States Senate last session by a vote of 91 to three. That ain't happening that often in the United States Senate, and we're going to do it again in the United States Senate, and that's what we want them to pass in the House, not the watered-down shadow legislation that they are thinking about doing, because they are under the sway of the armies of lawyers and lobbyists that big tech has mustered against them.

I sued the tobacco companies. I led the Attorneys General, helped to lead that effort, and there are remarkable similarities here. A product that kills people, executives denying their product is doing any harm, documents that show they know what their products are doing, and continued resistance to reform. And the question is, what will the courts do about it? In the case of tobacco, we got not only money, but we got reforms in advertising and structure. And let me just come right to the point here. Let's focus on the Kids Online Safety Act, because that is the remedy we need. We don't need to eliminate Section 230. I'm in favor of eliminating Section 230. I advocated eliminating Section 230 literally, I'm embarrassed to tell you, probably close to 30 years ago, and people said, "You're crazy." They're not saying you're crazy anymore, because they see Section 230 has outlived its purpose, but the Kids Online Safety Act is about product design.

If you buy a car that has a defective steering wheel, or you buy a space heater for your home that blows up when you turn it on, nobody says there's First Amendment protection for it, because they're just expressing themselves, or Section 230 somehow protects them. This is about product design, and the Kids Online Safety Act doesn't involve eliminating Section 230. So, don't let Speaker Johnson or anybody else confuse you. You don't need to take on Section 230, but we will, we should, and eventually we'll win. I want to call attention to some of your excellent cross-examination, Ms. Lanier. You had not only Mark Zuckerberg on the stand, but you also had Adam Mosseri. He's the head of Instagram, correct?

Rachel Lanier:

Yes.

Sen. Richard Blumenthal (D-Conn.):

And you asked Mark Zuckerberg whether Instagram was addictive and whether the company any longer sought to maximize time spent on the app, and he denied it, right?

Rachel Lanier:

Yes.

Sen. Richard Blumenthal (D-Conn.):

And then you showed him internal documents that in effect demonstrated he was lying, right?

Rachel Lanier:

The amount of dancing that was done, you would have thought Zuckerberg was a ballroom dancer.

Sen. Richard Blumenthal (D-Conn.):

And then you showed Mosseri the same kinds of documents after he made the same kinds of denials, documents that showed they were actually concerned that they were continuing to keep kids so that they retained people on their sites. More eyeballs means more money, more advertising revenue, and he denied it as well, correct?

Rachel Lanier:

Right.

Sen. Richard Blumenthal (D-Conn.):

So, maybe it would make sense for some enterprising law enforcer, like a district attorney, to look into whether they told the truth and maybe whether they failed to tell the truth under oath, correct?

Rachel Lanier:

It seems like a reasonable approach to me.

Sen. Richard Blumenthal (D-Conn.):

I agree totally with my colleague, Senator Durbin, that those executives ought to be called back before us, and they ought to be asked about that testimony under oath in the California courtroom. And I think we ought to give a lot of credit to some of the whistleblowers. Frances Haugen came to the subcommittee that Chairman Blackburn and I headed and spoke truth to power. She was one of the first. She came with documents. The documents are vital to these cases. I want to thank Senator Blackburn for her leadership. We have been steadfast partners in this effort. It's an example of bipartisan cooperation. If anybody says to you, "Well, there's no more bipartisan cooperation." This is an example of how it works, likewise with Senator Hawley and I, the GUARD Act. Senator Britt has been a real advocate, as have others on our side. Senator Klobuchar has been a leader.

Senator Graham and I have legislation on Section 230. If you want to hear about the evils of Section 230, just buttonhole Senator Graham. But you have brought out ... Let me just end with this. I apologize, Madam Chair. You've brought out the best in the United States Senate. I hope you will bring out the best in the United States Congress. We need the House to act on the Kids Online Safety Act. Thank you.

Sen. Marsha Blackburn (R-Tenn.):

Thank you. Senator Britt, you're recognized.

Sen. Katie Boyd Britt (R-Ala.):

Thank you, Madam Chairwoman. I want to begin where Senator Blumenthal left off, and that is first saying thank you to the two of you. There has been no more tireless advocate for children online, their safety, their protection than you. Senator Blackburn, alongside Senator Blumenthal, the work that you've done in this area, particularly on KOSA, I want to urge our House colleagues to pass the Senate version. We need to do that now. America deserves better. We can produce that, and to Senator Blumenthal's point, that means we have to work together and I certainly hope that the House will follow our lead on that.

To the parents here, thank you for sharing your story. Thank you for being so brave and courageous. Ms. Bogard, you said you have testified over 14 times here. When you just said the last words you said to your son, "I love you. I love you too." I think every parent up here envisions that happening in their very home. We used to lock our doors at night and believed we had kept our children safe, and now we do that, but yet the enemy is in the palm of our kids' hands. And so, thank you for telling your story. Mrs. Noring, thank you so much for telling yours. I think you bring out both what is happening online and the effects that COVID had with the shutdown and the schools and all of the things that occurred. And so, I appreciate you continuing to tell your story to all of the parents out there.

I had an opportunity to tell Megan and Saul this today, but you are what is pushing this, your story, honoring your children. That's what's making a difference here. Senator Hawley and I have talked about this countless numbers of times, but you are the fuel and the momentum that's actually going to get something done, so thank you so much.

I'd like to start with you, Ms. Lanier. Outstanding job. Wow. Thank you. On behalf of all of us, as a mom of two teenagers, you got right in the fight and got real results. I think we have a lot of parents out there though that don't actually know the harms of social media. Can you take just a brief second? Because what you uncovered, I think every parent needs to know that these are actually designed to addict our children. Will you talk briefly, if you were to have a 30-second PSA to all parents in America, what would you want them to know about these social media platforms?

Rachel Lanier:

Thank you, Senator Britt. I would say that what you just said hit the nail on the head: that the access that these companies have to our children is frightening. They are able to access them in the safety of a child's home and addict them to the platforms. And what's so scary is that the companies will tell parents and Americans and tell all of you one thing, and their platforms are doing something completely different. They will say, "We have tools. We have tools. We can help people."

Sen. Katie Boyd Britt (R-Ala.):

And what you uncovered that the earlier that they know, the earlier they get our children addicted, then the more likely they are to be a lifetime user and the more likely they are to stay on there, and the longer they stay on there, then what? The more money they make. Is that right?

Rachel Lanier:

Exactly.

Sen. Katie Boyd Britt (R-Ala.):

So, they are putting people behind their profits, and in this case, these people are our most vulnerable and our greatest asset, that's our children. It's disgusting. Professor Leary, I'd like to ask you after the verdict that she so beautifully helped deliver. We've had a lot of people say, "Well, that's it. We don't actually need anything. Now we've seen that there is a remedy. There is a remedy." I heard you say earlier, "There can't just be a remedy. There has to be protection and prevention." Talk to me about what the pitfalls are in that line of thinking and what you also think needs to be done.

Mary Graw Leary:

Thank you. Yes, that is a dangerous thought to think that after, and let's be clear, and all of you have alluded to it, decades of attorneys, state's attorneys generals trying to pierce that wall of Section 230, not being able to enforce their own state laws, not being able to hold a company liable for actually receiving child sexual abuse material. The list goes on. Creative and skilled attorneys like Ms. Lanier and many of the folks, the Attorney General of New Mexico, the fact that they managed to get through once or twice, and hopefully more, is not the solution. And some reasons why it is not the only solution. It's important. But why?

One is the inability to be preventive. And we see this week in New Mexico from the media reports that there has been not only pushback from the defendants that this state court judge does not have the power to do some of the things that are being asked of it. And the state court judge himself has expressed a concern. He said, "I am not a legislator, so that's a limit." And then, the other limit is financial. $300 million is a lot of money to most people. It's not to a $400 billion company. So, the idea that that will change the ecosystem and incentivize won't be the only answer.

Both these things, civil, private rights of action and affirmative legislation together, are what creates prevention.

Sen. Katie Boyd Britt (R-Ala.):

Well, the time to act is now. I have some follow-up questions. I'll submit those for the record and look forward to working with all of you to actually achieve results. And thank you again for telling your story.

Mary Graw Leary:

Thank you.

Sen. Marsha Blackburn (R-Tenn.):

Senator Coons, you're recognized.

Sen. Christopher A. Coons (D-Del.):

Thank you, Chair Blackburn. Thank you, Ranking Member Klobuchar. And thank you to all my colleagues who've worked so hard to advance the Kids Online Safety Act, to advance the GUARD Act, to advance the ENFORCE Act. We've got a great group of co-sponsors here. We've had some great votes, but none of them have reached the President's desk. So please, and I know this seems a hard thing to ask, keep at it.

As the father of three kids who survived social media and made it to their late 20s, I had a heavy heart listening to your story about losing your son, Devin, and about losing your son, Mason. The idea that serving up connections to fentanyl dealers or to a choking challenge is just ordinary business and protected behind a shield created by this Congress is something I have a hard time living with. Thank you for taking from your loss and turning it into a positive path forward for progress.

There are a lot of other parents here with us today. Could you please just raise up the photos of your children that I see so many of you holding? Please take a moment, if you could, and look at those faces, Senator Klobuchar and Senator Blackburn. Those are the faces that are the reminder of why you're here and why we should be here. Thank you, and thank you for sharing from your losses for us.

Senator Blumenthal shared that as Attorney General, he was part of the litigation that took many, many years to get Big Tobacco to finally come forward, not willingly, with the information about how harmful and dangerous their product was. What was striking about your testimony today was the massive gap between what the tech companies already know about the effects of their products and how little the general public and parents know and how hard it is to get that information.