AI is McDonald’s. That Will Be Good, Bad, and Catalytic for Civil Rights Advocacy.

David Brody / Feb 23, 2026

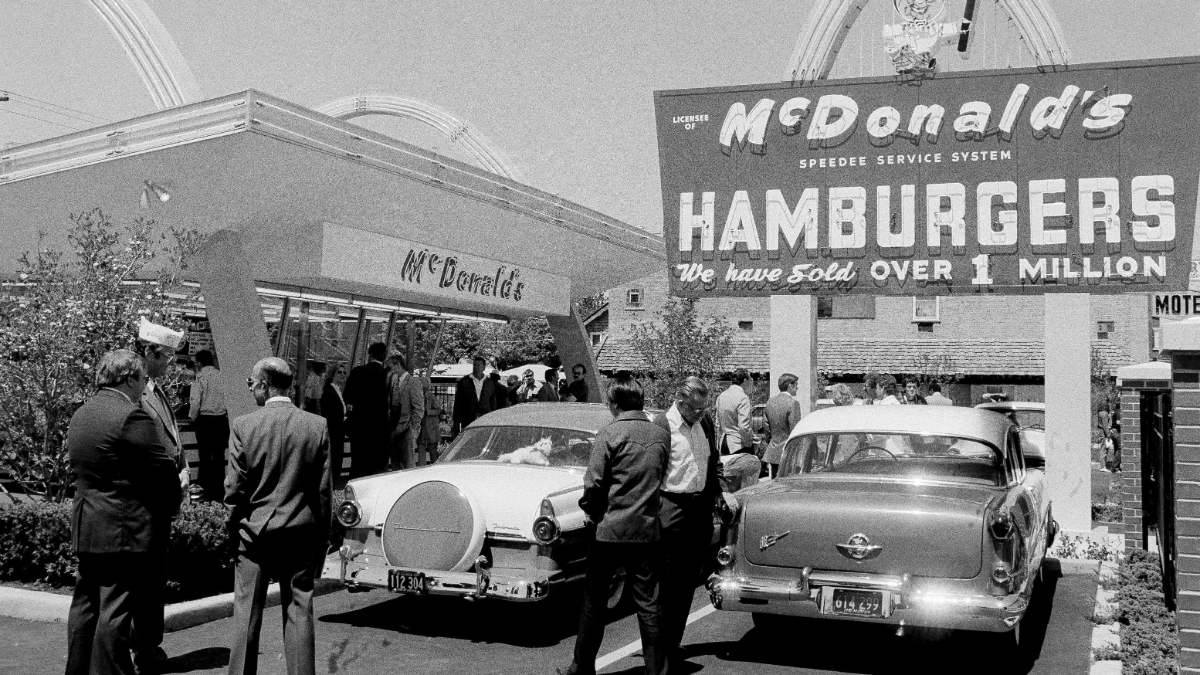

The McDonald's Museum, complete with automobiles from the 1950s in the parking area, was opened in suburban Des Plaines, Ill., on the site of McDonald's founder Ray Kroc's original store, May 21, 1985. (AP Photo/Charles Knoblock)

If your media consumption is anything like mine, a lot of the conversation you see about AI goes something like: “Look at how amazing it is! This will change everything, including disrupting every job.” Followed by: “That’s nice for coding, but AI still rampantly makes stuff up and is anti-creative. Plus, all these societal harms. This is just Silicon Valley hype.”

Both perspectives are right, and the people who hold them often talk past each other. While chatbots have a lot of problems and tech CEOs promise pie-in-the-sky fantasies, we should not lose sight of the ways in which AI productivity tools can serve our communities. AI will be transformative in ways we cannot yet divine, both good and bad.

As it stands today, AI is McDonald’s. We need to make AI better than McDonald’s.

Before the advent of cheap fast food, most people’s eating options consisted of cooking at home, buying from street vendors, or going to comparatively expensive and slow restaurants. (Yes, this is an oversimplification; I beg the historians’ forgiveness.) Dining out was largely a luxury good and not a routine experience. McDonald’s made dining out cheap and fast, which made a previously unattainable good attainable to the general public, particularly families.

The flipside, of course, is that the food is low-quality, unhealthy, possibly addictive, and carries environmental externalities. Sound familiar? McDonald’s also exploited low-wage labor, much as AI companies rely on overseas data annotators and content moderators. Fast food conglomerates disrupted local markets in ways that suppressed small competitors, and they engaged in regulatory capture to mask their true costs. But McDonald’s and fast food were still transformative for the eating options of everyday people, which in turn shifted behaviors and social patterns. For example, market substitutes for household labor, like fast food, made it easier for women to enter the workforce.

The current trajectory of AI may have similar effects on how people engage with computers as fast food has had on how we eat. Or fast fashion, or particle board furniture, or any other previously high-cost good revolutionized by mass production. AI makes custom computing and software development cheap and accessible enough to mass produce. While not everyone wants to vibe code, LLM chats are a novel user interface that many non-techies find useful for knowledge work such as quickly making a spreadsheet, organizing files, or designing a report. (Just remember to check the data.)

AI will make certain types of work, hobbies, and lifestyles more egalitarian and accessible but, like McDonald’s, at lower quality than what we currently expect from artisanal knowledge work. It will also undermine the economics of disrupted industries. Will your Ikea table break after a few years while your grandmother’s table is rock solid? Yes, but you can furnish your whole home at Ikea for the price of one heirloom table. Like many types of mass-produced products, AI outputs are cheap, fast, and brittle. (Whether they stay fast, cheap, or brittle remains to be seen.)

Advocates need to tailor their approaches—and their criticisms—to different modalities of AI. Too often when people debate the merits of AI, they are really talking only about LLMs used to chat or create synthetic media, but many other technologies fall under the umbrella of “AI,” and LLMs themselves have uses beyond content generation. Chatbots have different pros and cons from AI tools that function more like, well, tools.

When functioning as a more accessible user interface, AI tools allow people to do things they otherwise could not, whether because they lack resources, skills, access, or time. For advocates for low-income and marginalized groups, even a flawed tool that can compensate for manifestations of inequality is something worth careful consideration. In many everyday situations where the stakes are low or precision is not required, particularly if the alternative is nothing, AI being good enough is good enough. To a low-income entrepreneur or nonprofit director who wants to look professional and cannot afford a web or graphic designer, it is not always constructive to lambast how much electricity ChatGPT uses to hallucinate. It is like criticizing someone in a food desert for eating at McDonald’s too much. We have to solve the food desert holistically, including requiring corporate actors to carry their share of the burden, but in the meantime we recognize that people are going to make the best choices they can given their situation.

As advocates for civil rights and social justice, we want to envision, create, and capture the potential upsides of AI for everyone while properly addressing the costs, harms, and challenges. We want the future of this technology to be determined democratically to favor the best interests of the public, not just a small handful of billionaires. AI companies should not profit from these tools while offloading the economic, environmental, and other costs onto the rest of us, particularly externalities dumped on low-income communities and communities of color. And we currently lack adequate infrastructure to audit these systems to surface deep-rooted issues like bias. But focusing too narrowly on the downsides carries its own opportunity cost: we fail to anticipate what could be coming next and to help shape its arrival.

One part of the immediate challenge is getting AI to work for our communities. Here is a sample of questions civil rights and tech policy advocates are weighing:

- What kinds of AI tools do organizers want in their toolkit? Are there technical barriers and friction points in labor or movement organizing that AI could help circumvent, like building apps to help people protect their neighbors or overcome censorship?

- People of color and women are underrepresented in tech. If vibe coding reduces educational or training barriers, could that expand representation in the talent pool and lead to new innovations from different voices? How can AI productivity tools support entrepreneurship by groups who face disproportionate challenges accessing capital and starting businesses?

- We know there are huge risks of AI exacerbating discrimination in hiring and housing. Beyond just stopping discriminatory AI, what would algorithmic decision systems that affirmatively enhance equal opportunity look like?

- How can democracy advocates use AI to reach, educate, register, and turn out voters? Not just generating political ads but collecting and analyzing electoral data to inform strategy. How can AI unlock capabilities previously reserved for expensive political consultants and party machines?

- How do communities want AI to serve their needs? For those lacking affordable or accessible healthcare, what types of health tools do they want? What types of financial tools do low-income families want? How do we build tools that empower, not exploit?

- We know that Big Law will have the best AI legal tools at its disposal. But what tools could help a public defender manage a giant caseload or conduct investigations they otherwise could not? How can defense attorneys use AI to challenge unreliable forensic evidence and wrongful convictions?

- How are civil rights advocates using AI to investigate hate crimes and civil rights violations when law enforcement will not? The world watched the killings of Renee Good and Alex Pretti in Minneapolis through myriad cameras, but not all victims have such visibility. How can open-source AI investigative tools bring truth to light?

A valid response to some of these questions might be, “It should not be like this. People should have equal access to healthcare, jobs, representation, and general social mobility as a baseline. Fix it with public policy, not AI.” That is fair.

There are important first steps legislators can take. They can enact the AI Civil Rights Act, which is endorsed by over 80 civil rights, civil liberties, consumer, privacy, and labor organizations; comprehensive consumer privacy legislation like the American Data Privacy and Protection Act of 2022 (ADPPA); and government surveillance legislation like the Fourth Amendment is Not For Sale Act of 2024 (FAINFSA). If Congress had passed ADPPA or FAINFSA, it would not be as easy for ICE and CBP to use many of the AI-enabled commercial surveillance tools they are currently using to terrorize immigrant communities. Together, these three bills would establish basic guardrails: AI that is safe, non-discriminatory, accountable, and privacy-protective.

And yet, and yet—as inequity and iniquity flourish in real time, we cannot afford to make the perfect the enemy of the good. We have an unequal society and we have a potentially transformative new set of technologies, despite the flaws. If major reforms remain infeasible and we can use AI for harm reduction, that is still a net positive interim step. We need a “both, and” strategy.

McDonald’s was not the end of the story. Today we have more diverse options for quick dining, and if you want healthy, ethical, and eco-friendly options, you can find them in most places. Some communities block fast food franchises to support local businesses instead. Those options exist because people created demand for them. We can also demand AI regulations and AI tools that serve our communities. We can move beyond McDonald’s.